OpenAI shipped ChatGPT Images 2.0 on April 21, 2026, powered by a new model called gpt-image-2. Within 12 hours it took the #1 spot on every category of the Image Arena leaderboard by a +242 point margin - the largest lead ever recorded there. Three things actually matter for creators: real multilingual text rendering (Korean, Japanese, Arabic, Hindi all readable inside images), a thinking mode that plans layout and verifies its own output, and free access to the core quality jump. DALL-E 2 and 3 retire on May 12, 2026.

What is actually new

Per OpenAI's launch post (April 21, 2026), gpt-image-2 brings concrete upgrades across four dimensions:

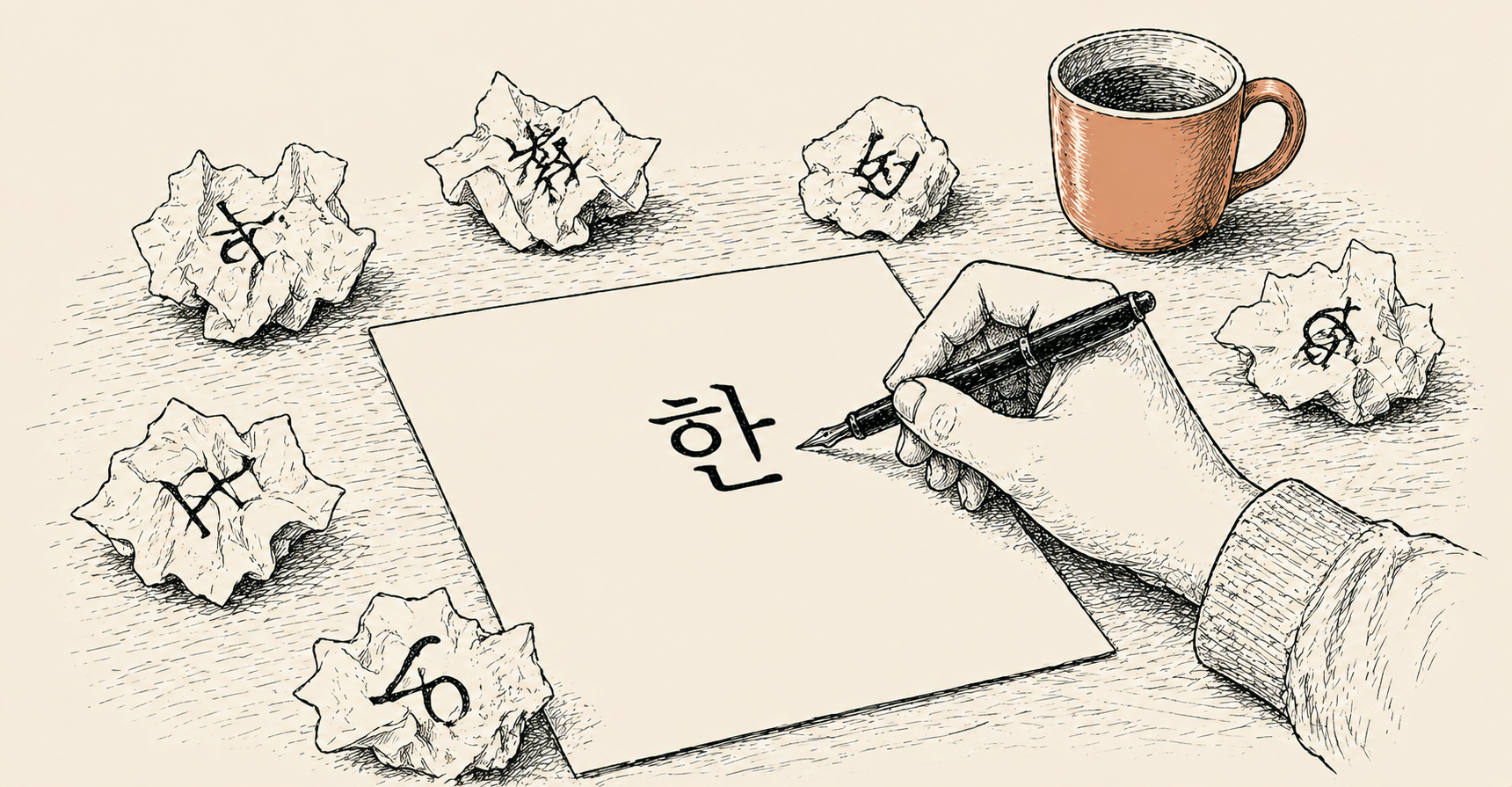

Korean, Japanese, Chinese, Hindi, Bengali, Arabic, Cyrillic, and Greek scripts now render legibly inside generated images. The broken-Hangul-on-thumbnail problem that plagued every prior image model is largely fixed for short headlines.

OpenAI's first image model with native reasoning. It can plan layout, search the web for real-time references, generate up to 8 images from a single prompt, and double-check its own output before returning. Magazine-grade composition is now viable.

Up to 2K resolution. Native 9:16 (Reels and Shorts), 16:9 (YouTube thumbnails), 4:5 (Instagram), and square - no more upscaling square outputs and praying.
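
To make the ratios concrete, here is a minimal sketch that turns each one into pixel dimensions. It assumes "2K" means a 2048-pixel long edge; the article does not list the exact sizes the model accepts, so treat the numbers as illustrative. The `ASPECT_RATIOS` keys and `pixel_size` helper are names made up for this example.

```python
from fractions import Fraction

# Aspect ratios named in the article (width / height), keyed by typical use.
ASPECT_RATIOS = {
    "reels_shorts": Fraction(9, 16),
    "youtube_thumbnail": Fraction(16, 9),
    "instagram_feed": Fraction(4, 5),
    "square": Fraction(1, 1),
}

# Assumption: "2K" is read here as a 2048-pixel long edge.
LONG_EDGE = 2048


def pixel_size(target: str, long_edge: int = LONG_EDGE) -> tuple[int, int]:
    """Return (width, height) with the longer side equal to long_edge."""
    ratio = ASPECT_RATIOS[target]
    if ratio >= 1:
        # Landscape or square: width is the long edge.
        return long_edge, round(long_edge / ratio)
    # Portrait: height is the long edge.
    return round(long_edge * ratio), long_edge


for name in ASPECT_RATIOS:
    print(name, pixel_size(name))
```

Check any computed size against the sizes the API actually accepts before wiring it into a pipeline.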

Instant Mode is open to every ChatGPT user, including the free tier. Thinking Mode (web search, multi-image batching, output verification) is gated to Plus ($20/mo), Pro ($200/mo), Business, and Enterprise. API access uses the same model.

Why this matters for creators outside the US

Until now, generating a thumbnail in Korean, Japanese, or Arabic meant an ugly workflow, such as using Midjourney for the background and then manually adding the text in a separate font tool. That came to roughly 30-60 minutes per thumbnail.

With gpt-image-2, a single prompt returns 8 variations, which brings it down to roughly 1-2 minutes per thumbnail.

The three creator workflows where this lands hardest:

- YouTube thumbnails in non-English markets - bold native-script text plus a high-contrast background, eight variants in one shot.

- Instagram and LinkedIn carousels - 10-card sets where typographic consistency across cards used to require a designer.

- TikTok and Reels static intros - 9:16 frames with overlaid native-script captions, generated in a single pass.

Pricing and access tiers

Different gates for different modes:

- Instant Mode (Free) - every signed-in user. Daily generation cap, no Thinking features.

- Thinking Mode (Plus, $20/mo) - web search, layout reasoning, 8-image batching, automatic output verification. Higher daily limits.

- Pro ($200/mo) - near-unlimited Thinking Mode plus priority compute.

- API - call directly via model ID gpt-image-2. Per-token and per-resolution pricing.

The same announcement confirmed that DALL-E 2 and DALL-E 3 will be retired on May 12, 2026. Any production pipeline still calling DALL-E endpoints needs to migrate within roughly three weeks.
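
The "roughly three weeks" figure is plain date arithmetic on the two dates above. As a small sketch, a pipeline could run the same calculation as a countdown or CI warning; the `days_until_sunset` helper is a hypothetical name for this example.

```python
from datetime import date

LAUNCH = date(2026, 4, 21)  # announcement date given in the article
SUNSET = date(2026, 5, 12)  # DALL-E 2 and 3 retirement date given in the article


def days_until_sunset(today: date) -> int:
    """Days remaining before the DALL-E retirement date (negative once past)."""
    return (SUNSET - today).days


# Counting from launch day gives exactly three weeks.
print(days_until_sunset(LAUNCH))
# Counting from the current date gives the live countdown.
print(days_until_sunset(date.today()))
```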

Caveats

- Multilingual rendering is not bulletproof yet. Short headlines hold up well; long sentences in Korean or Arabic can still produce occasional character distortion. Visually proof everything before publishing.

- Likeness and brand policies are unchanged. Generating real people's faces or branded logos remains restricted. Source attribution stays the user's responsibility.

How to migrate a DALL-E pipeline (3 weeks left)

If your automation stack still calls the DALL-E API, you have roughly three weeks before the May 12, 2026 sunset. Three things to plan for:

Swap the model ID - usually the smallest change.

Replace dall-e-3 or dall-e-2 in your model parameter with gpt-image-2. The response schema is largely compatible, so most downstream parsing code keeps working without modification. Run an integration test on a low-volume queue first.
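
As a rough sketch of that swap, the helper below rewrites the `model` field of a request-parameter dict and reads a response item defensively. It assumes your pipeline builds its request as a plain dict and that response items carry either a `url` or a `b64_json` field, as in the existing Images API. `migrate_request` and `extract_image` are hypothetical names, and whether gpt-image-2 accepts every legacy parameter is something the integration test has to confirm.

```python
from typing import Any

LEGACY_MODELS = {"dall-e-2", "dall-e-3"}
NEW_MODEL = "gpt-image-2"  # model ID given in the article


def migrate_request(params: dict[str, Any]) -> dict[str, Any]:
    """Return a copy of the request parameters with a legacy model ID swapped."""
    migrated = dict(params)
    if migrated.get("model") in LEGACY_MODELS:
        migrated["model"] = NEW_MODEL
    return migrated


def extract_image(item: dict[str, Any]) -> tuple[str, str]:
    """Return ("url", value) or ("b64_json", value) from one response item.

    Downstream code should not assume that one particular field is
    always the one that is populated.
    """
    for key in ("url", "b64_json"):
        value = item.get(key)
        if value:
            return key, value
    raise ValueError("response item carries neither 'url' nor 'b64_json'")


old = {"model": "dall-e-3", "prompt": "red thumbnail", "size": "1024x1024"}
print(migrate_request(old))
```

Keeping the swap in one function means the low-volume test queue and the production queue can share the same code path.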

Rewrite your prompts - DALL-E shorthand leaves quality on the table.

gpt-image-2 follows layout instructions far more literally than DALL-E did, which means terse prompts that worked before now under-specify the new model. For thumbnails with native-script text, name the text position, font weight, background contrast, and aspect ratio explicitly. Treat this as a one-time prompt audit, not a per-call concern.
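
One way to run that audit is to move each template into a small structure that forces the four fields to be stated. The sketch below is only an illustration: `ThumbnailSpec`, its default values, and the prompt wording are assumptions for this example, not a format OpenAI recommends.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ThumbnailSpec:
    """Layout decisions that a terse prompt would leave unstated."""
    headline: str
    text_position: str = "centered in the upper third"
    font_weight: str = "extra-bold sans-serif"
    background: str = "solid dark background, high contrast against white text"
    aspect_ratio: str = "16:9"


def build_prompt(spec: ThumbnailSpec) -> str:
    """Spell out every layout decision instead of relying on shorthand."""
    return (
        f"A {spec.aspect_ratio} YouTube thumbnail. "
        f'Headline text, rendered exactly as written: "{spec.headline}". '
        f"Text position: {spec.text_position}. "
        f"Font weight: {spec.font_weight}. "
        f"Background: {spec.background}."
    )


print(build_prompt(ThumbnailSpec(headline="AI 크리에이터 2026")))
```

Because the spec is written once per template, the per-call code only supplies the headline.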

Model the cost - resolution drives the bill.

Instant Mode pricing is roughly comparable to DALL-E 3 per image. Thinking Mode costs more because it consumes additional tokens for layout reasoning and verification. For pipelines generating 1,000+ images per month, a hybrid pattern works well: keep batch generation on Instant, escalate only headline thumbnails to Thinking. This caps cost while reserving the quality unlock for visuals where it matters.
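
To see how the hybrid pattern caps spend, here is a back-of-the-envelope sketch. The per-image prices are placeholders invented for illustration, not published rates, and a flat per-image cost is itself a simplification of per-token and per-resolution billing. `Prices`, `pick_mode`, and `monthly_cost` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prices:
    """Per-image costs in USD. Placeholders for illustration, not published prices."""
    instant: float
    thinking: float


def pick_mode(is_headline: bool) -> str:
    """Escalate only headline visuals to Thinking; keep everything else on Instant."""
    return "thinking" if is_headline else "instant"


def monthly_cost(total_images: int, headline_images: int, prices: Prices) -> float:
    """Estimated monthly spend under the hybrid pattern."""
    if not 0 <= headline_images <= total_images:
        raise ValueError("headline_images must be between 0 and total_images")
    batch_images = total_images - headline_images
    return batch_images * prices.instant + headline_images * prices.thinking


# Hypothetical numbers: 1,000 images a month, 50 of them headline thumbnails.
placeholder = Prices(instant=0.04, thinking=0.20)
hybrid = monthly_cost(1000, 50, placeholder)
all_thinking = monthly_cost(1000, 1000, placeholder)
print(f"hybrid: ${hybrid:.2f}, all Thinking: ${all_thinking:.2f}")
```

With these made-up prices the hybrid split comes to about $48 a month against about $200 for sending everything to Thinking. Swap in your real measured costs before drawing conclusions.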

OpenAI has not published a one-click migration tool, but the API surface is similar enough that most teams report a half-day port for typical pipelines. The longer tail of work is prompt rewriting, which scales with how many distinct visual templates a pipeline uses.

How to check if you have it

The fastest way to confirm access on your account:

- Open chat.openai.com and start a new chat

- Prompt: Generate 8 variants of a 16:9 YouTube thumbnail with a bold red background and the headline "AI Creator 2026"

- If 8 images return at once, Thinking Mode is live (Plus or higher)

- If a single image returns, you are on Instant Mode (free-tier behavior)

- For API users, the model ID is gpt-image-2

Key takeaways

- OpenAI launched ChatGPT Images 2.0 (gpt-image-2) on April 21, 2026

- Multilingual text rendering finally works inside images - Korean, Japanese, Chinese, Hindi, Arabic. Direct unlock for non-US creators.

- Instant Mode is free for everyone, Thinking Mode is Plus ($20/mo) or higher. 8-image batching, web search, and self-verification are Thinking-only.

- DALL-E 2 and 3 retire on May 12, 2026 - DALL-E API workflows need to migrate within ~3 weeks.

Sources

- OpenAI (April 21, 2026) - Introducing ChatGPT Images 2.0

- MacRumors (April 22, 2026) - OpenAI Launches ChatGPT Images 2.0 With Thinking Capabilities and Better Text Rendering

- PetaPixel (April 21, 2026) - OpenAI Claims ChatGPT Images 2.0 Can Think

- 9to5Mac (April 21, 2026) - OpenAI unveils ChatGPT Images 2 image-gen model capable of magazine design